Over the next ten years, major progress will need to be made in conventional computing to keep pace with the requirements of new applications. High Performance Computing (HPC) is one way to extend the capabilities of computing technologies, and large R&D investments have been made in this sector in recent years. In their interview with RealIZM, Rolf Aschenbrenner and Michael Töpper speak openly about their view of the industry and their expectations for the future.

What is the difference between ‘normal computers’ and high-performance computers?

Rolf Aschenbrenner: From a scientific point of view, High Performance Computing is the next stage of computers based on the von Neumann architecture, one that yields extremely high-performance systems. HPC also includes technologies such as quantum and neuromorphic computing. A quantum computer is a computing device that uses quantum phenomena (quantum superposition, quantum entanglement) to process data, while a neuromorphic computer is a device that uses very-large-scale integration (VLSI) systems containing electronic circuits that emulate the neural structure of the human brain. There is also another term for it: ‘next-generation computing’.

Michael Töpper: From an application point of view, activities like video calls, presentations, and online banking can be done on normal computers based on the von Neumann architecture. We do not need quantum computers to make a call. For newer applications such as autonomous driving, however, we do need higher computing power and greater bandwidth for data connections. Other high-performance computer architectures, such as neuromorphic or quantum-based systems, will in future serve very specific applications, like weather simulation models or simulations for developing new pharmaceuticals.

What concrete technological features are we talking about with the ‘supercomputers’?

Michael Töpper: A good example is the story of the digital camera. It is incredible how much the quality of digital photos has improved over the last few years. Videos from old digital video recorders were of awful quality, because processing photos and videos requires a lot of computing power. Today, we not only take high-resolution photos and videos with our smartphones, we can even edit them on the spot. That was unimaginable ten years ago, when not even notebooks could do it.

Rolf Aschenbrenner: In this example, we need to be precise about the term computing power. Streaming, for example, does not require much computing power, but it does require a lot of bandwidth. For autonomous cars, on the other hand, computing power is indeed paramount, in the sense of the speed at which certain operations can be performed on a computer. The power of a conventional computer is often measured in transfer rates, whereas the power of a quantum computer depends on the number of qubits and the number of calculations per unit of time.

What is the status quo in the field of High Performance Computing?

Rolf Aschenbrenner: High Performance Computing is too vast a field to give you a single answer. HPC based on conventional computer architecture is being developed mainly outside Europe. In edge computing, which includes high-performance computers in cars, for example, Europe is in a better position, because many of the major car manufacturers and their suppliers are based here.

The first quantum computers were developed in North America, while Europe plays a significant role only in developing certain individual technologies that form part of a complete quantum computing system.

Michael Töpper: Unfortunately, there are no technology giants in Europe that are willing to invest what is needed. There are, however, companies that originally developed high-performance systems for other purposes and have perfected them to the point where they can now be used for autonomous driving. After all, the data that an autonomous car processes is not unlike the data used in virtual reality technology.

What challenges do you see for the future of High Performance Computing in Europe?

Rolf Aschenbrenner: One big problem in this field is high-performance ICs. Manufacturing technology at 14 or 7 nanometers is simply unavailable in Europe. No companies here work at these nodes, so all such chips have to come from Asia or America, where the know-how lies. We can buy those chips and build a system with them, but it would be a major advantage to manufacture the chips as well. Building a fab for a new generation of ICs requires enormous investment: we are speaking of sums far in excess of 10 billion euros.

In a nutshell: Europe has a great deal of expertise in systems, but for components, we are dependent on Asia.

Michael Töpper: Most of the companies growing right now are foundries, that is, companies that manufacture ICs to customer designs. Unfortunately, Europe has no foundries for high-end ICs with geometries below 14 nm.

What ethical challenges can you see for a broader implementation of High Performance Computing?

Rolf Aschenbrenner: Our desire to integrate electronics into everything must be balanced by thinking clearly about what we want and where we want to end up as human beings. Ethical concerns are an important aspect, and not only in medical technology. Ethics is always part of the discussion when we talk about technology. Think of the classic dilemma in traffic accidents: a self-driving car might have to decide whether to swerve left and hit three children or swerve right and hit eight elderly people. Questions like these need to be discussed.

What is Fraunhofer IZM doing to support High Performance Computing?

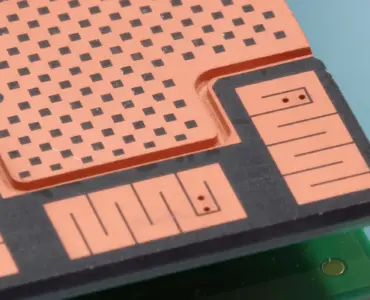

Rolf Aschenbrenner: To build high-performance computers, we need high-performance modules. We are talking about complex Systems-in-Package, an approach also known as heterogeneous integration, in which very diverse components are packaged in a single module. This requires special know-how in fine-line structuring, thermal management, and power and signal integrity. These are the topics we deal with, and this is where we can contribute a great deal across many application areas. We have a PCB line that enables us to produce fine structures on large-area substrates.

Michael Töpper: The connection between chip and memory must be very short and fast. We are one of the few institutes worldwide working on ultra-fine chip interconnection and substrate technology in large-area formats. It is not only about speed, but also about saving power. The power required for data communication has been increasing for years, and the only way to counteract this is through miniaturization, short distances, and the minimization of power losses, possibly by means of optical technologies.

Do you have concrete goals for the near future of High Performance Computing?

Michael Töpper: Substrate technology plays an important role: we want to test the limits of large-area organic substrates in terms of miniaturization. We are working in the range of 2 µm lines and spaces, and we want to go even finer, until we hit the physical limits. In semiconductor manufacturing, we will soon reach the limits of Moore’s Law, the natural end of this concept. This is why we have to look for completely new concepts, and this is what we intend to achieve with organic substrates combined with so-called chiplets.

How does High Performance Computing relate to artificial intelligence?

Rolf Aschenbrenner: In my view, artificial intelligence ultimately means that data is fed in and certain results are derived from it. The larger and more complex the data, the better and more reliable the results will be. Neuromorphic computing, for instance, allows fast, parallel calculations. New materials could be designed on a computer: you enter chemical formulas, and the AI then recombines them logically.

Michael Töpper: The current search for a Covid vaccine is a good example. Researchers had a huge number of computer programs running that scanned all kinds of possibilities. This approach could be considered AI technology. But in my opinion, artificial intelligence is a philosophical term whose meaning is constantly changing. ‘Real’ artificial intelligence starts when we stop noticing that it is there. A computer is used best when you do not realize that you are using it. In some cases, this is already happening: your smartphone suggests routes, for example, because it knows where you want to go. In 30 years, we will understand the term artificial intelligence differently.

Will we see high-performance computers become part of everyday life?

Michael Töpper: Keep in mind: 15 years ago, there were debates about whether we would need cameras in mobile phones. Some high-ranking executives at mobile phone companies called them gimmicks. Seen from the vantage point of 2005, today’s smartphones are unimaginably high-end computers with multiple cameras and other sensors. New technologies and features that we cannot even imagine right now will become routine.
